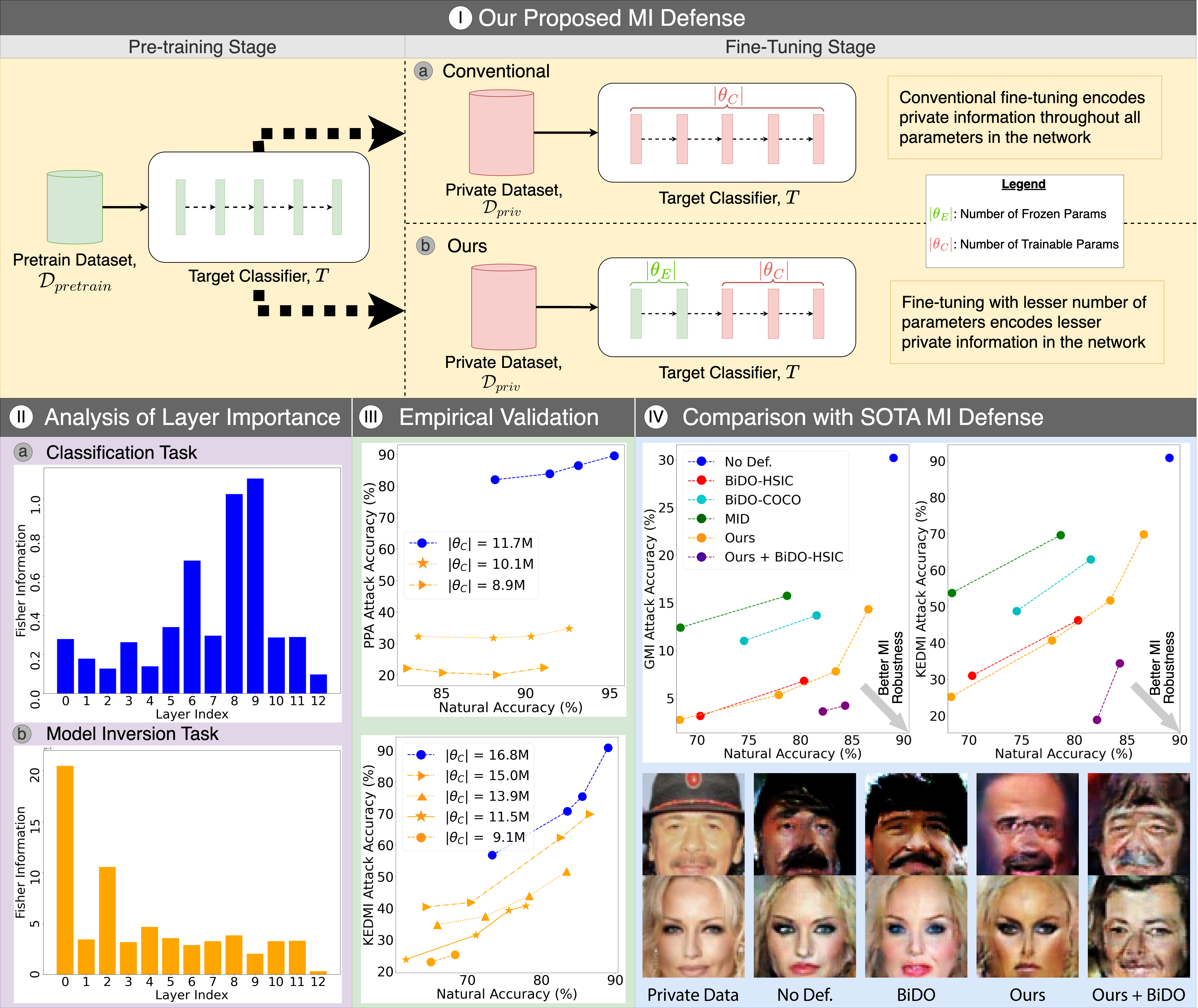

Figure 1. TL-DMI leverages transfer learning to limit the number of layers encoding sensitive information from the private training dataset. By freezing pre-trained layers and fine-tuning only a small portion on private data, TL-DMI reduces the information exploitable by MI attacks — as justified by Fisher Information analysis — while preserving strong model utility.

Abstract

Model Inversion (MI) attacks aim to reconstruct private training data by abusing access to machine learning models. Contemporary MI attacks have achieved impressive attack performance, posing serious threats to privacy. Meanwhile, all existing MI defense methods rely on regularization that is in direct conflict with the training objective, resulting in noticeable degradation in model utility.

In this work, we take a different perspective, and propose a novel and simple Transfer Learning-based Defense against Model Inversion (TL-DMI) to render MI-robust models. By leveraging transfer learning, we limit the number of layers encoding sensitive information from private training data, thereby degrading the performance of MI attacks. We conduct an analysis using Fisher Information to justify our method. Our defense is remarkably simple to implement — without bells and whistles, TL-DMI achieves state-of-the-art MI robustness across extensive experiments.

A New Perspective on MI Defense

Novel Defense Perspective: Training Paradigm

All prior MI defenses add regularization terms that directly oppose the classification objective, trading accuracy for privacy. TL-DMI instead modifies the training paradigm — using transfer learning to reduce the amount of private information encoded — with no conflict with the task objective.

Fisher Information Justification

We use Fisher Information to analyze how many layers encode private dataset-specific information. Transfer learning naturally limits this by reusing generic pre-trained features, reducing the exploitable information surface that MI adversaries rely on during gradient-based inversion.

Remarkably Simple Implementation

TL-DMI requires no additional loss terms, no hyperparameter grid search, and no architecture modifications. Simply initialize with a pre-trained model and fine-tune only a limited number of layers on the private dataset — a standard transfer learning workflow already familiar to practitioners.

SOTA MI Robustness, Preserved Utility

TL-DMI achieves state-of-the-art MI robustness across SOTA attacks (PPA, LOMMA, KEDMI, PLG-MI) and datasets (CelebA, FaceScrub), while maintaining competitive or superior model utility compared to existing regularization-based defenses (MID, BiDO).

TL-DMI: Transfer Learning as Privacy Defense

The core insight is that MI attacks exploit the private-dataset-specific information encoded throughout the model's layers. By leveraging transfer learning, we control how much of the network is adapted to the private dataset — leaving the majority of layers encoding generic, non-sensitive representations that MI adversaries cannot exploit.

Pre-trained Feature Extraction

Start from a model pre-trained on a large public dataset (e.g., ImageNet). The early and middle layers already encode rich, generic visual features that are independent of the private training data. These layers are frozen and not updated during fine-tuning.

Controlled Fine-tuning on Private Data

Only the final few layers are fine-tuned on the private dataset Dpriv. This strictly limits the number of layers that encode private-dataset-specific information, reducing the exploitable MI surface without requiring any modifications to the training loss.

Fisher Information Analysis

We use Fisher Information to measure how much private-data-specific information is stored in each layer. TL-DMI naturally reduces this per-layer Fisher Information by limiting gradient updates to only the final layers, providing a principled theoretical justification for the defense mechanism.

Complementary to Existing Defenses

Because TL-DMI operates at the training paradigm level rather than through regularization, it is orthogonal to and complementary with existing MI defenses such as MID and BiDO — enabling further improvements when combined.

TL-DMI vs. Existing MI Defenses

Unlike all existing MI defenses, TL-DMI introduces no conflict with the training objective and requires no regularization hyperparameter search — while achieving SOTA privacy-utility trade-off.

| Defense | Defense Type | Conflicts w/ Training? | Hyperparam Search? | MI Robustness |

|---|---|---|---|---|

| No Defense | — | — | — | Low |

| MID | Mutual Info Reg. | Yes | Yes | Medium |

| BiDO | Bilateral Reg. | Yes | Yes (extensive) | Medium–High |

| LS | Label Smoothing | Partial | Yes | Medium |

| TL-DMI (Ours) | Training Paradigm | No | No | SOTA |

TL-DMI is the first MI defense to operate at the training paradigm level — achieving SOTA robustness with zero conflict with the task objective.

Citation

@inproceedings{ho2024model,

title = {Model Inversion Robustness: Can Transfer Learning Help?},

author = {Ho, Sy-Tuyen and Hao, Koh Jun and Chandrasegaran, Keshigeyan and Nguyen, Ngoc-Bao and Cheung, Ngai-Man},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages = {12183--12193},

year = {2024}

}